China Warns Against Autonomous AI Weapons as Military Technology Debate Intensifies

China’s military has warned the United States against allowing artificial intelligence systems to make lethal battlefield decisions, saying machines should never be given the authority to determine life and death during armed conflict. Speaking at a press briefing in Beijing, defence ministry spokesperson Jiang Bin said that unrestricted military use of artificial intelligence could weaken ethical standards and undermine accountability in warfare. Chinese officials stressed that advanced technologies must remain under meaningful human supervision and that military systems using AI should never operate independently when making decisions that could result in the loss of human life.

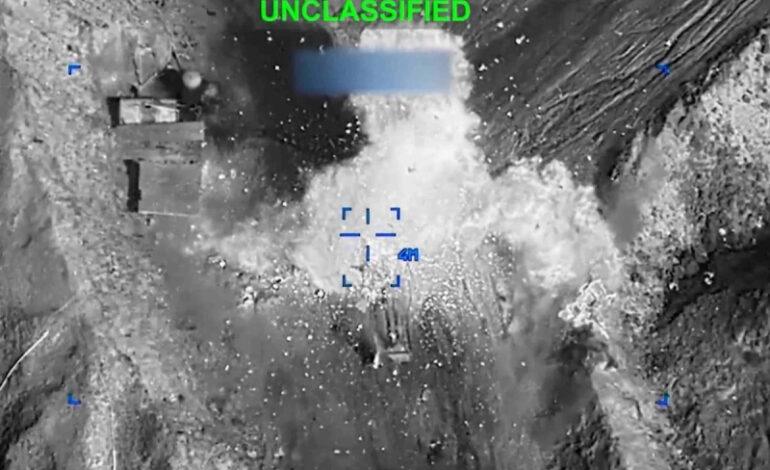

The warning comes as global debate grows over the role of artificial intelligence in modern warfare. Governments and military institutions are increasingly exploring how AI can support intelligence analysis, surveillance and battlefield decision-making. Reports about the United States expanding the military use of advanced AI systems have drawn attention from policymakers and technology companies around the world. Chinese officials said allowing algorithms to control combat decisions could create dangerous scenarios in which machines influence or determine critical actions without sufficient human oversight.

China’s defence ministry said excessive reliance on AI-driven weapons could lead to unpredictable outcomes and weaken traditional accountability structures in military operations. Officials argued that removing human judgment from lethal decisions could erode established norms governing warfare. They also warned that rapid development of autonomous military technologies could trigger an international arms race in artificial intelligence. Such competition could accelerate deployment of systems whose reliability and ethical implications have not been fully tested in real-world conflict situations.

The comments emerged during a period of growing tension surrounding military uses of artificial intelligence and disputes between governments and technology companies over how the technology should be deployed. In the United States, disagreements between defense authorities and AI developers have intensified after some technology firms attempted to limit how their systems could be used by the military. One recent dispute involved an American AI company that resisted removing safety restrictions designed to prevent its software from being used in autonomous weapons systems or mass surveillance operations.

Chinese officials emphasized that AI should be used in ways that support security while respecting ethical boundaries. They said that human control must remain central to any weapons system that uses advanced technologies, arguing that algorithms should assist human decision-making rather than replace it. According to the defence ministry, maintaining human oversight is essential to ensuring that military operations follow international humanitarian principles and remain accountable under existing laws governing armed conflict.

Experts in artificial intelligence governance have raised similar concerns about the rapid militarization of AI technologies. Researchers warn that autonomous weapons powered by advanced algorithms could introduce new risks because current AI models are not always predictable or reliable in complex environments. Mistakes made by automated systems in real battlefield situations could lead to unintended escalation or civilian harm. Analysts therefore argue that international agreements or regulatory frameworks may eventually be required to establish clear limits on how AI can be used in warfare.

The debate over military AI reflects a broader competition among global powers to develop advanced technologies that can influence future security strategies. Countries including the United States and China are investing heavily in artificial intelligence research, with applications ranging from logistics and surveillance to cyber defense and autonomous systems. As these capabilities evolve, governments and technology companies are increasingly confronting ethical questions about the appropriate boundaries for machine involvement in armed conflict.

China’s latest statement underscores growing international concern that technological innovation should not outpace ethical and legal safeguards. By emphasizing the need for human control over AI-powered weapons systems, Chinese officials signaled that military artificial intelligence is likely to remain a central issue in global security discussions as the technology continues to advance.