China Trains Advanced AI Models Fully on Domestic Chips

China has taken a notable step in its push toward technological self-reliance: China Telecom has announced the successful development of advanced artificial intelligence models trained entirely on domestically produced hardware. The state-owned operator confirmed that its latest TeleChat3 models were built using a Mixture-of-Experts (MoE) architecture and trained exclusively on AI chips developed by Huawei Technologies. The achievement offers practical validation that complex large language models can be trained at scale without reliance on foreign semiconductors. MoE architectures have gained global attention because they activate only a subset of a model’s parameters for each token, reducing the compute required per token while maintaining performance, which makes them particularly relevant for countries facing hardware constraints. By demonstrating end-to-end training on domestic components, the project signals that Chinese developers are narrowing capability gaps in advanced AI infrastructure under increasingly restrictive global technology conditions.
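For readers who want to see why MoE designs cut compute, the routing idea can be sketched in a few lines of Python. This is an illustrative toy and not TeleChat3’s implementation, which the announcement does not detail: the expert layers, the router weights, the sizes (`DIM`, `NUM_EXPERTS`, `TOP_K`), and the function names are all assumptions chosen for clarity.

```python
import math
import random


def softmax(scores):
    # Numerically stable softmax over a short list of scores.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(dim, rng):
    # Hypothetical expert: a single dense dim x dim linear layer.
    weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]

    def expert(x):
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

    return expert


def moe_forward(x, router_weights, experts, top_k=2):
    # The router produces one score (logit) per expert for this token.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    # Only the top_k highest-scoring experts are evaluated; the rest are skipped.
    ranked = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)
    chosen = ranked[:top_k]
    # Gate weights are normalized over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        y = experts[idx](x)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


rng = random.Random(0)
DIM, NUM_EXPERTS, TOP_K = 4, 8, 2  # toy sizes, assumed for illustration
experts = [make_expert(DIM, rng) for _ in range(NUM_EXPERTS)]
router_weights = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
token = [rng.uniform(-1, 1) for _ in range(DIM)]

output, active = moe_forward(token, router_weights, experts, TOP_K)
print(f"experts run: {len(active)} of {NUM_EXPERTS}")
```

In this toy setup each token passes through two of eight experts, so most expert parameters sit idle on any given step even though all of them contribute to the model’s total capacity. Production systems add load balancing and distribute experts across many chips, which this sketch leaves out.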

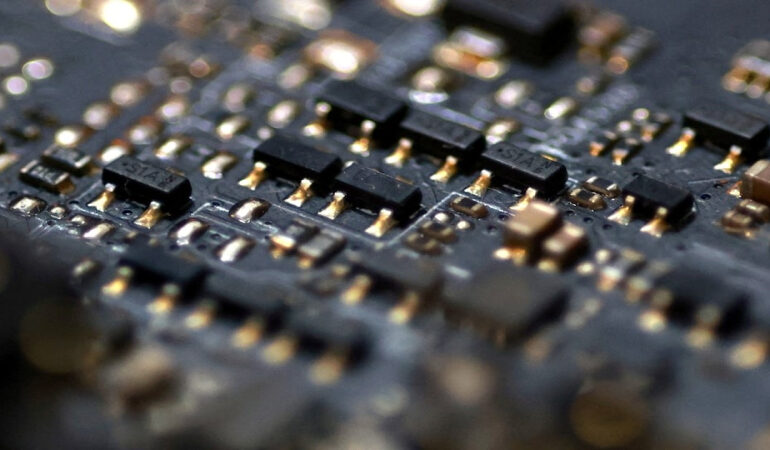

The TeleChat3 series spans model sizes from over one hundred billion parameters to systems reaching into the trillion-parameter range. Training was conducted on Huawei Ascend 910B processors using MindSpore, Huawei’s open-source deep learning framework, which has been positioned as an alternative to Western AI software stacks. According to technical documentation released by China Telecom’s artificial intelligence institute, the training process achieved stable scaling performance across large clusters of domestic chips. This addresses a long-standing concern within China’s AI community that homegrown processors might struggle with distributed training workloads. While benchmark comparisons with leading international models remain limited, the successful deployment suggests that domestic hardware and software ecosystems are becoming mature enough for industrial-scale experimentation rather than remaining confined to pilot projects.

Beyond its technical significance, the development carries broader policy and market implications. China’s leadership has repeatedly emphasized the need to secure core digital infrastructure as global supply chains fragment. AI training capability sits at the center of that objective, particularly as advanced models become embedded in telecommunications, cloud services, and public sector applications. China Telecom’s position as a major state-owned carrier gives the project additional weight, signaling that domestically trained models could eventually be integrated into national networks and enterprise offerings. The use of MoE architectures also reflects a strategic emphasis on efficiency, aligning with efforts to maximize output from constrained computing resources while reducing exposure to external technology shocks.

The announcement is likely to be closely watched by regulators, investors, and technology planners both inside and outside China. It underscores how sanctions and export controls are reshaping innovation pathways rather than halting progress outright. While challenges remain in software tooling, ecosystem depth, and international competitiveness, the ability to train complex AI models solely on domestic chips marks a tangible shift from aspiration to execution. As Chinese firms continue to adapt architectures and workflows to local hardware realities, the line between technological catch-up and parallel development is becoming less clear, adding a new layer of complexity to the global AI landscape.